Our next guest: VITÅL SIGNS

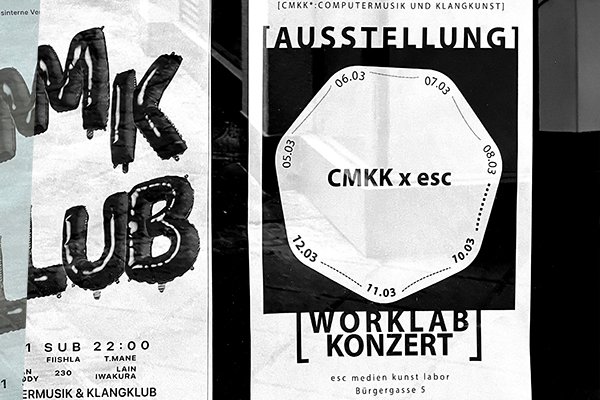

Opening:

Duration:

Face recognition in military hands is not innovation, it’s escalation.

When OpenAI partners with the Pentagon, algorithms move to battlefields.

The algorithm you see on this screen produces bias. It gets confused. It makes mistakes: mistakes that may be artistically interesting but that, in the wrong hands, become disasters.

Facial recognition systems are known to be biased: they show dramatically higher error rates when identifying women, people of color, and ethnic minorities, largely because their training datasets are unrepresentative. When mass surveillance is carried into the theater of war, these algorithmic prejudices can turn into death sentences.

AI errors are not abstract. A false positive in war is not a glitch; it costs a life.

Mass surveillance is not security. It’s control.

Disclaimer:

No large online models from big corporations are used.

No internet connection is active.

No images are stored on any server.

Link:

Collaborations/Team:

Francesco Casanova

Collaboration:

CMKK, in cooperation with esc medien kunst labor

Guest at esc mkl:

ComputerMusik and KlangKunst